Every decade or so we have to debate the desirability of adopting national standards for education. People tend to be in favor of them when they imagine that they are the ones writing the standards. But when everyone gets into the sausage-making that characterizes policy formulation, it generally becomes clear that no one is going to get what they want out of national standards. What’s worse is that the resulting mess would be imposed on everyone. There’d be no more laboratory of the states, just uniform banality.

Of course, some people always hope that they’ll somehow manage to sneak their preferred vision into place without having to go through the meat grinder. That’s what is happening now with the National Governors Association effort at “voluntary” national standards. In a process completely lacking in transparency and open debate, some are rushing to announce a national standards fait accompli.

My colleague Sandra Stotsky tells us what’s what:

“If another country wanted other countries to respect its educational system and the reforms it was trying to make, who would it choose to lead such an important professional project as the development of its national standards in mathematics and in the language of its educational system itself? In any other country in the world, one would expect a distinguished mathematician at the college level to be asked to chair the mathematics standards-writing committee–someone who commands the respect of the mathematics profession (and obviously is an expert on mathematics). For the language standards-writing committee, one would likewise expect an eminent scholar in a college-level department–someone whose command of the language and understanding of the texts that inform the development of this language could not be questioned. If the National Governors Association and the Council of Chief State School Officers had thought about national pride (and national need) as well as academic/educational expertise, then all of us would respect the Common Core Initiative and look forward with eagerness to the drafts the NGA and CCSSO have promised to make public in July.

These two organizations could have followed, for example, the exemplary procedures followed by the National Mathematics Advisory Panel, on which I had the privilege to serve. The Panel was chaired by the former president of one of the major universities in the country, all Panel members were identified at the outset, their qualifications were made known to the public, their procedures were open to the public and taped as well, and the final product was hammered out in public, after dozens of reviewers provided critical comments.

But instead of choosing nationally known scholars to chair and staff these committees–to assure us of the integrity and quality of the product–the NGA and the CCSSO have, for reasons best known to themselves, treated the initiative as a private game of their own. The NGA and the CCSSO haven’t even bothered to inform the public who is chairing these committees, who is on them, why they were chosen, what their credentials are, and why we should have any confidence whatsoever in what they come up with.

One person has announced on his own to the press and to a state department of education that he is chairing the mathematics standards-writing committee. He has not been contradicted by anyone at NGA or CCSSO, so we must assume he’s for real. It turns out he is an English major with no academic degrees in mathematics whatsoever. No one has yet announced on his/her own that he/she is chairing the English standards-writing committee. One wag has already wondered whether this person might be a mathematics major with no academic degrees in English. But it’s possible the sad joke in mathematics is not being repeated in English.

This country deserved better for a project of such national importance.”

Sandy Kress added these words of wisdom (pardon the capitalization since this was a comment on a post at Eduwonk):

“i suspect after the good feelings wear off, other governors and chiefs will begin to ask whether they can or should consider new standards at this time. once they learn about how hard it is to write new standards, they will ask even more questions. when we get to the controversies around whole language vs. phonics, they will ask more questions still. then comes computation vs. concepts. then comes all the many questions that arise once you get below the level of 30,000 feet. then – God forbid – you might even get to the place where you might possibly find the new standards under consideration to be no better than (or even possibly worse than) the standards you have! could it be that the tradeoffs that happen nationally will be the same as those that occur in the states? could the same interest groups intervene? could this nice dream be interrupted by the demons that bedevil state standard setting? could these interests be the problem as much as variation? oh no, could it be there’s no santa… no, i won’t go there.

and, oh yes, what about performance standards? if we ever get to detailed precise standards in each grade for reading and math, do the participants agree to common performance standards? if they don’t, who’s kidding whom? the real problem today is not so much that some states have vastly higher standards than other states; it’s more that their performance standards are greatly different. have the states, or will the states, commit to making those the same? if not, this will be utterly fruitless.

listen – DO NOT GET ME WRONG – i’m all for higher, fewer, clearer standards. i’ve spent a lot of time working on improving texas’ standards over the past 20 years. i’ve spent a lot of time with the hunt institute pushing more common standards. this is indeed the right thing to do.

but this process is going to be much more difficult than some think. it won’t happen overnight, nor should it. and there will remain great variation at the end of the day. it is utterly naive and/or foolish to expect states to jump track from their current gameplans, particularly where they’re reasonably well thought out.

be prepared for states to recognize this “the morning after.” texas just recognized it before “the drinking began.”

also be prepared to realize that a better approach might be for one or more of these organizations to begin by recruiting the best and the brightest and actually doing the hard work of developing a few sets of model standards and then shopping them to the states, with the political support of those who rightly want high, common standards as well as perhaps some incentives from the feds to take these steps.”

(edited for typos)

Posted by Jay P. Greene

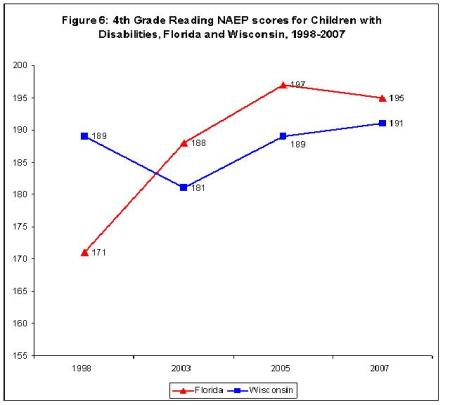

One finds the same pattern among children with disabilities. In 1998, Wisconsin students with disabilities scored 18 points higher than those in Florida. By 2007, they scored 4 points lower.

(Guest Post by Matthew Ladner)