(Guest Post by Matthew Ladner)

Too much of Arizona’s K-12 debate focuses on inputs, too little on outputs. Some districts have been gaining enrollment, others losing enrollment, but this is an entirely secondary concern when compared to the question of whether an increasing number of Arizona students are acquiring the knowledge, skills and habits for success in life.

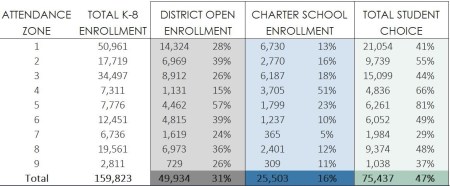

The above chart from the Center for Student Achievement shows enrollment trends for districts and charters in a number of Arizona districts. Some districts gained students despite the rise of charters (Chandler, Higley, Queen Creek) while others lost enrollment. All of the above Arizona district/charter combos rated anywhere from good to spectacular in the measurement of academic growth by Stanford’s Sean F. Reardon, with the lone exception of Coolidge Unified.

The Scottsdale district/charter combo came in at the 64th percentile, Tucson at the 67th, Queen Creek at the 68th, Deer Valley at the 83rd and Roosevelt at the 89th. All three of the truly spectacular scores (the Higley, Chandler and Phoenix Elementary combos at the 95th, 95th and 99th percentiles respectively) came from situations where both the district and charter sectors grew rapidly. Congratulations to the students and educators of these communities are richly deserved.

The growth party did not stop in 2015. Here are the ELA proficiency trends in AZMerit for all of these districts:

and here is the same chart for math:

Some of these gains are large (see Queen Creek and Scottsdale), others incremental, but every single one of them is moving in the right direction.

Wait, my telepathic powers are picking something up. You were thinking, “Ladner, are you really going to celebrate Roosevelt going from 17% proficient to 23%?”

I’m glad you asked.

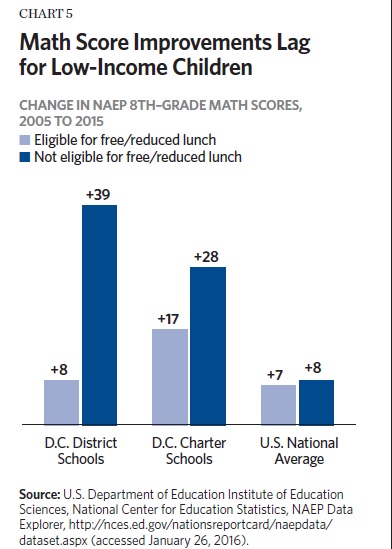

Two things: first, the AZMerit academic bar is high, and second, most of the rest of the country seems mired in academic stagnation. Of course I’m not satisfied with 23% proficiency (#NoAZwe’vegottoWinMOAAARRR!) but I am indeed happy that both low-performing and high-performing districts show improvement.

Experience is a harsh mistress, and one of the things she teaches the policy analyst is to never rely solely on state test scores. NAEP will release 2017 scores in a few weeks. Let’s see what happens next. In the meantime, the freedom for families to choose between schools and the opportunity for Arizona educators to create new schools according to their vision of excellence seem to be broadly working.

Posted by matthewladner
