Miss Indiana crowned as Miss America

(Guest Post by Matthew Ladner)

Todd Ziebarth from the National Alliance for Public Charter Schools has responded to criticism from yours truly, Max Eden and others regarding the soundness of judging charter school laws based on adherence to a model bill, rather than by their results. I encourage you to read Todd’s response.

Ziebarth in essence claims that facts on the ground in the states that passed the last five laws, rather than flaws in the laws themselves, have dampened the impact of otherwise good laws. I have no reason to doubt that differences in circumstances from state to state will influence speed out of the gate. I do not, however, share Ziebarth’s preference for ranking charter laws by their adherence to a model bill when it is possible to judge them by their results, as the Brookings Institution did in this map:

This map measures the percentage of students by state who have access to a charter school in their zip code. It’s not a perfect measure; after all, some zip codes have multiple charter schools. Perhaps the measure could be improved upon. When, however, you see states with near-zero percentages on this map near the top of a ranking list, something seems out of sorts with the rankings. Yes, circumstances can influence how well you come out of the gate, but five new laws in a row failing to produce many schools isn’t a fluke; it looks more like a pattern.

Ziebarth notes that if we don’t include the recent charter bills that have yet to produce many charters, then you get the following list (each state listed along with the percentage of its students enrolled in charters). This revised list, however, remains problematic.

| Rank | State | % of students in charters |
| --- | --- | --- |
| 1 | Indiana | 4% |
| 2 | Colorado | 13% |
| 3 | Minnesota | 6% |
| 4 | District of Columbia | 46% |
| 5 | Florida | 10% |
| 6 | Nevada | 8% |
| 7 | Louisiana | 11% |
| 8 | Massachusetts | 4% |
| 9 | New York | 5% |
| 10 | Arizona | 17% |

OK, so the top-rated law (Indiana) has produced charter schools within the zip codes of only 19.5% of Indiana students, and those schools enroll 4% of the student population. The law has been in operation for a long time, but you still cannot even get a NAEP score for the state’s charter schools because of the wee-tiny size of the population. If one is a utilitarian sort, any set of criteria that ranks Indiana as having the top charter school law seems in need of revision.

Minnesota has the oldest of all charter school laws, passed in 1991, but its charters enroll only 6% of the kids, and only 37.7% of kids have access to a charter in their zip code. There is a word for that: contained. Minnesota gets a ton of credit for inventing charter schools, but its law doesn’t seem to be doing a whole lot to provide families with opportunities or to produce competitive pressure to shake things up.

DC charters, meanwhile, enroll 46% of total kids, and 87% of kids have access to a charter school in their zip code. It’s also easy to find evidence of academic success for DC charters. Judging by results, this certainly looks like a much better charter law than Indiana’s or Minnesota’s. Ironically, the main reason NAPCS dings the DC charter law in its scoring metric is a lack of equitable funding. DC charters, however, seem to be funded at a high enough level to capture 46% of the market, to provide access to 87% of kids, and to produce better results than DCPS. They also receive more generous funding per pupil than charters in most (all?) states. There is no contest between DC and either Indiana or Minnesota in terms of outcomes in my book.

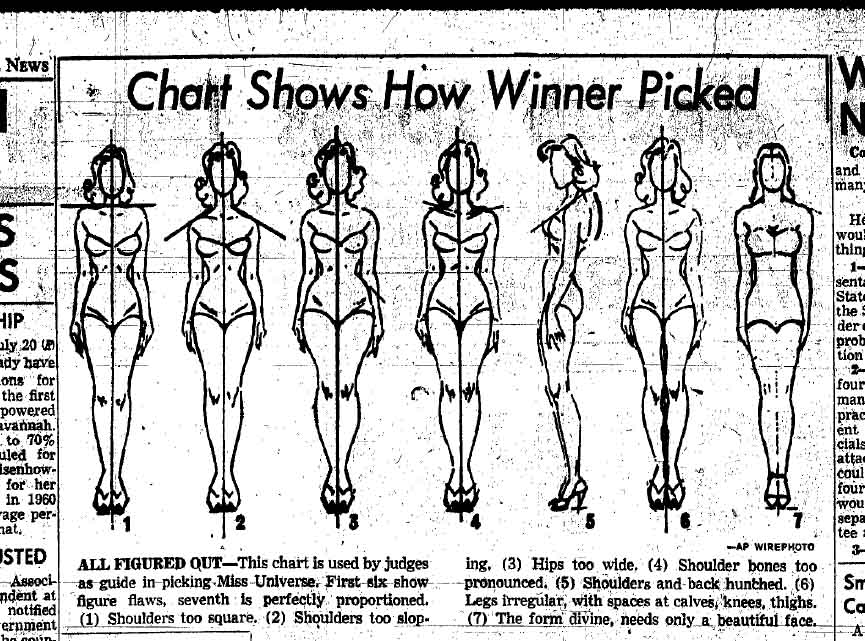

OK, I could go on, but I think the horse is dead. We’ve reached the point where it is possible to judge charter sectors by outcomes rather than by the criteria of a model bill beauty pageant.

In the end, charter school laws either produce seats or they don’t. Laws that fail to produce seats are failures. Laws that produce only a few seats are disappointments. Philanthropists should carefully reexamine their grant metrics to guard against the possibility that they have created a powerful incentive for groups to seek the passage of charter laws regardless of whether those laws ever produce many charter seats. I haven’t seen grant agreements, but I have watched as the last five laws failed to produce many schools. We are supposed to be creating meaningful opportunity for kids rather than merely colored maps.

Posted by matthewladner
